Speed matters — but not at the expense of fairness. As AI systems scale from pilots to production, the decisions we make about bias, accountability, and transparency become not just technical choices but moral ones.

The Challenge of Scale

There is a temptation, when building AI systems, to treat deployment as the finish line. The model performs well in testing. The client is eager. The business case is strong. Ship it.

But AI systems do not operate in labs — they operate in the world, where data is messy, populations are diverse, and consequences are real. A credit scoring model that performs with 95% accuracy overall might still systematically disadvantage a minority demographic. A hiring tool trained on historical data might encode the very biases it was meant to remove. At scale, these errors compound — and the people harmed are rarely the people who built the system.
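To make the credit scoring point concrete, here is a small arithmetic sketch. The group sizes and counts are invented purely for illustration and are not PGX data; they show how a 95% headline figure can coexist with a much weaker result for a smaller group.

```python
# Hypothetical counts, chosen only to illustrate the arithmetic.
groups = {
    "majority": {"n": 9000, "correct": 8730},
    "minority": {"n": 1000, "correct": 770},
}


def accuracy(correct: int, n: int) -> float:
    """Fraction of correct predictions."""
    return correct / n


total_n = sum(g["n"] for g in groups.values())
total_correct = sum(g["correct"] for g in groups.values())

overall = accuracy(total_correct, total_n)
per_group = {name: accuracy(g["correct"], g["n"]) for name, g in groups.items()}

print(f"overall: {overall:.2f}")          # overall: 0.95
for name, acc in per_group.items():
    print(f"{name}: {acc:.2f}")           # majority: 0.97, minority: 0.77
```

Because the larger group dominates the average, the overall number barely moves even when the smaller group's accuracy is twenty points lower. That is why per-group reporting matters.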

At PGX, we believe that responsible AI deployment is not a constraint on velocity — it is a prerequisite for building systems that are worth building in the first place.

Our Framework: Five Pillars of Responsible Deployment

Every AI system built and deployed by PGX, whether for internal use or as a client solution, must satisfy five governance pillars before going live in production.

- Transparency: Every decision made by an AI system must be explainable. We require all production models to provide human-readable explanations for their outputs: not just a score, but the factors that drove it. If we cannot explain a model's decisions, we do not deploy the model.

- Accountability: Human oversight is non-negotiable for high-stakes decisions. Autonomous AI actions are permissible only in well-defined, low-risk contexts. For anything that affects a person's livelihood, safety, or rights, a human must remain in the loop.

-

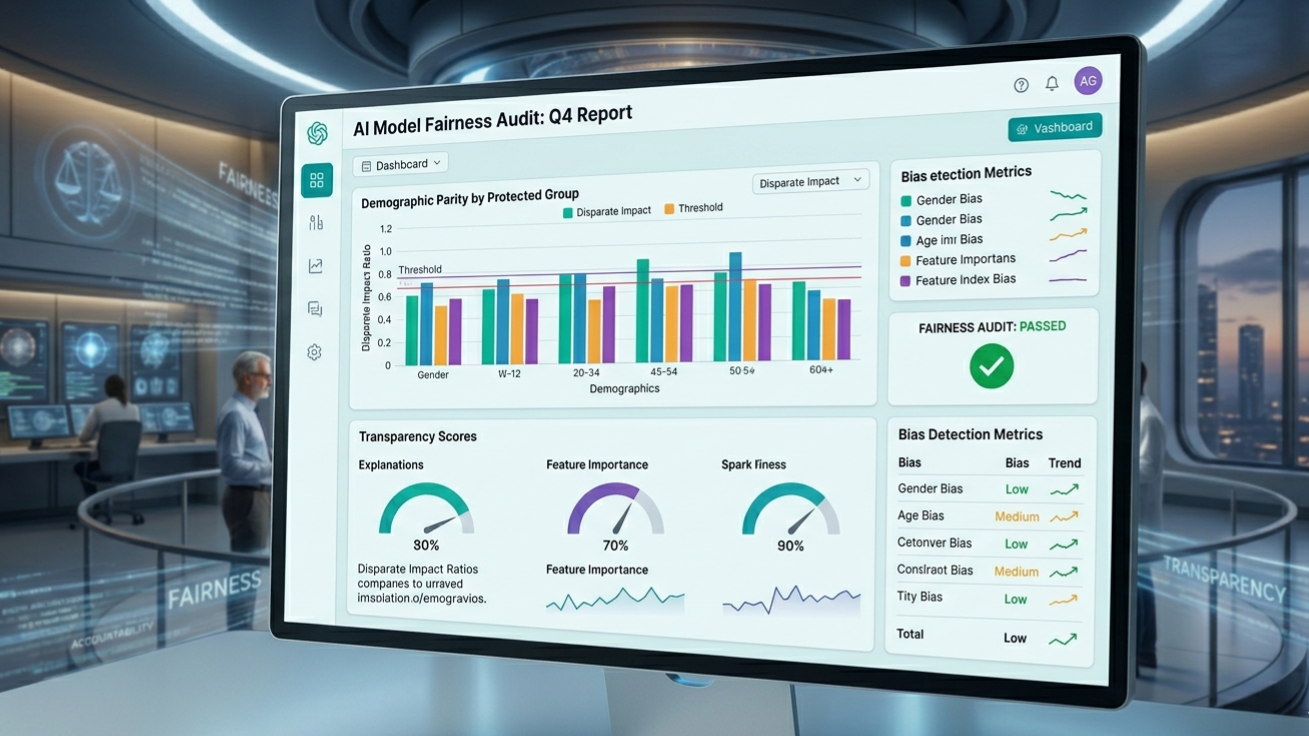

Fairness Bias audits are not a one-time exercise — they are an ongoing obligation. We measure demographic parity, equal opportunity, and individual fairness metrics before deployment and continuously thereafter. When bias is detected, we stop, investigate, and remediate before proceeding.

- Privacy: Data minimization is designed in, not bolted on. We collect only what is necessary, retain only what is required, and delete on schedule. Differential privacy and federated learning techniques are applied wherever feasible to limit individual data exposure.

- Safety: Every model undergoes adversarial testing before deployment, including red-teaming for edge cases, out-of-distribution inputs, and adversarial manipulation attempts. We maintain automated circuit breakers that can take a model offline if real-world performance degrades beyond defined thresholds.
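Two of the metrics named under the Fairness pillar have simple definitions that can be sketched in a few lines. The example below is a minimal illustration, not PGX's audit tooling: the function names and toy data are hypothetical. Demographic parity compares how often each group receives a positive prediction, and equal opportunity compares true positive rates across groups. Individual fairness is not covered here.

```python
from collections import defaultdict


def selection_rates(y_pred, groups):
    """Share of positive predictions per group (demographic parity view)."""
    positives, totals = defaultdict(int), defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def true_positive_rates(y_true, y_pred, groups):
    """Share of actual positives predicted positive, per group (equal opportunity view)."""
    hits, totals = defaultdict(int), defaultdict(int)
    for true, pred, group in zip(y_true, y_pred, groups):
        if true == 1:
            totals[group] += 1
            hits[group] += pred
    return {g: hits[g] / totals[g] for g in totals}


def max_gap(rates):
    """Largest difference between any two groups' rates; 0 means parity."""
    return max(rates.values()) - min(rates.values())


# Toy data, invented for illustration: 1 = positive outcome, 0 = negative.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

dp_gap = max_gap(selection_rates(y_pred, groups))
eo_gap = max_gap(true_positive_rates(y_true, y_pred, groups))

print(dp_gap)  # 0.5 (group a selected 75% of the time, group b 25%)
print(eo_gap)  # 0.5 (group a TPR 1.0, group b TPR 0.5)
```

What counts as an acceptable gap is a policy decision rather than something the code can settle. In practice a threshold would be agreed in advance and the same check re-run on live data.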

Where We Draw the Line

Not every AI application is one we will build. PGX maintains an explicit list of prohibited use cases — applications we will decline regardless of commercial interest. These include any system designed or reasonably likely to be used for mass surveillance, predictive policing, social scoring, political manipulation, or the targeting of protected groups.

This is not altruism. It is a recognition that the long-term value of AI — and of PGX as a company — depends on public trust. Systems that erode trust erode the entire ecosystem. We choose not to participate in that erosion.

What Good Looks Like in Practice

Responsible AI deployment is not a checklist — it is a culture. At PGX, that culture is built on three concrete practices:

Ethics Review Board

- A cross-functional team — including engineers, product managers, legal counsel, and external advisors — reviews every AI deployment before launch

- Board sign-off is required for any system that makes decisions affecting individuals

- Findings are documented and published internally to build institutional knowledge

Continuous Monitoring & Incident Response

- All production AI systems emit real-time fairness and performance metrics to a centralized observability dashboard

- Automated alerts trigger human review when metrics deviate from established baselines

- A documented incident response playbook ensures rapid, coordinated action when issues arise
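One way to picture how baseline alerts and the circuit breakers mentioned earlier could fit together is a two-level threshold check. This is a sketch under stated assumptions: the `Threshold` class, the `evaluate` function, and every number below are hypothetical and do not describe PGX's actual monitoring stack.

```python
from dataclasses import dataclass


@dataclass
class Threshold:
    baseline: float        # expected value of the metric
    alert_delta: float     # deviation that triggers human review
    breaker_delta: float   # deviation that takes the model offline


def evaluate(metric_value: float, threshold: Threshold) -> str:
    """Map a live metric reading to an action: 'ok', 'review', or 'offline'."""
    deviation = abs(metric_value - threshold.baseline)
    if deviation >= threshold.breaker_delta:
        return "offline"
    if deviation >= threshold.alert_delta:
        return "review"
    return "ok"


# Hypothetical thresholds for an accuracy metric.
accuracy_threshold = Threshold(baseline=0.95, alert_delta=0.02, breaker_delta=0.05)

print(evaluate(0.95, accuracy_threshold))  # ok
print(evaluate(0.92, accuracy_threshold))  # review
print(evaluate(0.85, accuracy_threshold))  # offline
```

Keeping the review level well below the breaker level gives people a chance to investigate before an automatic shutdown is needed.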

90-Day Audit Cycle

- Every production AI model is re-evaluated on a 90-day cycle, regardless of whether issues have been flagged

- Audits include re-running bias checks on current live data, not just the training set

- Models that no longer meet the standard are retrained or retired — not quietly left in place
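The scheduling side of a fixed 90-day cycle is easy to automate. The sketch below assumes a simple mapping from model name to last audit date; the model names and dates are made up for illustration.

```python
from datetime import date, timedelta

AUDIT_INTERVAL = timedelta(days=90)


def next_audit_due(last_audit: date) -> date:
    """Date by which the next audit must happen."""
    return last_audit + AUDIT_INTERVAL


def models_due(last_audits: dict, today: date) -> list:
    """Names of models whose 90-day window has elapsed, in alphabetical order."""
    return sorted(
        name for name, last in last_audits.items()
        if next_audit_due(last) <= today
    )


# Hypothetical registry of last audit dates.
last_audits = {
    "credit_scoring": date(2024, 1, 10),
    "resume_screen": date(2024, 3, 1),
}

due = models_due(last_audits, today=date(2024, 4, 15))
print(due)  # ['credit_scoring']
```

The check depends only on the calendar, which matches the rule that audits run whether or not an issue has been flagged.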

The Bottom Line

Speed and responsibility are not opposites. The companies that will define the next decade of AI are not those that deploy fastest — they are those that build trust fastest. And trust, once broken by an AI system that discriminates, manipulates, or fails without accountability, takes years to rebuild.

At PGX, we move fast. But we move thoughtfully. And we believe that distinction is precisely what makes our work worth doing.